Early this year, I wrote ‘NWO – the impact factor paradox’, about the actual and perceived role of the impact factor in NWO grant applications, and the intentions of NWO to consider novel ideas to organize research assessment for funding distribution.

The announced national working conference and international conference on such ex ante research assessment have since taken place (I participated in the former). Last week, NWO made public which concrete measures it plans to take in this area (full report currently in Dutch only (pdf)). As stated in the report, the proposed measures have been approved by the council of university rectors, organized in the VSNU.

Below, I will go over what to me personally are the most salient aspects of the report, not only in the context of reducing the burden of applications and peer review (which is what NWO focused on), but also in light of research assessment in general and the implications for open science in particular.

REDUCING THE BURDEN OF APPLICATIONS AND PEER REVIEW

In the report as well as in the process leading up to it (including the two stakeholder conferences), the main problem to address has consistently been framed as that of an increasing burden on researchers to apply for funding, with an accompanying increase in workload for peer reviewers.

Consequently, most proposed measures aim to remedy this issue specifically – e.g. by postponing calls until enough funds are available to guarantee a minimum rate of acceptance (Fig. 1), stimulating universities to play an active role in guiding researchers’ decisions to apply for NWO funding instruments, and making decisions on tenure less contingent on the receipt of NWO funding.

Fig. 1. Acceptance rate

POSSIBLY MAYBE

There are also some potential measures that NWO decided require further investigation. In two cases, NWO promises to allocate specific resources to such investigations:

- SOFA (Self-organized funding allocation)

With the SOFA model, researchers would receive baseline non-competitive funding, with the stipulation that they distribute part of that funding among other researchers (see link for more details). While this model will not at this time be implemented, or experimented with on a small scale, NWO has committed to financing ‘a couple of’ PhD-students to further analyze the model and investigate whether it could be fit for use (Fig. 2).

Fig. 2. SOFA model
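To make the mechanism concrete, here is a minimal sketch of a single SOFA-style round. It is an illustration only, not the model NWO intends to study: the function name, the 50% pass-on fraction, the amounts and the researchers are all made up, and it only redistributes part of the baseline in one round.

```python
def sofa_round(base_funding, pass_on_fraction, choices):
    """One simplified SOFA-style round (hypothetical illustration).

    Every researcher gets `base_funding`, keeps (1 - pass_on_fraction)
    of it, and splits the rest among colleagues according to `choices`,
    which maps each donor to {recipient: share} with shares summing to 1.
    """
    received = {name: 0.0 for name in choices}
    pool = base_funding * pass_on_fraction
    for donor, shares in choices.items():
        if donor in shares:
            raise ValueError(f"{donor} cannot pass funding on to themselves")
        if abs(sum(shares.values()) - 1.0) > 1e-9:
            raise ValueError(f"shares of {donor} must sum to 1")
        for recipient, share in shares.items():
            if recipient not in received:
                raise ValueError(f"unknown recipient: {recipient}")
            received[recipient] += pool * share
    kept = base_funding * (1 - pass_on_fraction)
    return {name: kept + received[name] for name in choices}


# Assumed example: three researchers, baseline of 100 each, half passed on.
choices = {
    "a": {"b": 1.0},
    "b": {"a": 0.5, "c": 0.5},
    "c": {"b": 1.0},
}
print(sofa_round(100.0, 0.5, choices))
# {'a': 75.0, 'b': 150.0, 'c': 75.0}
```

The total budget stays the same (300 in this example); only its distribution changes, driven by the choices of the researchers themselves rather than by a review panel.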

In this light, it is also interesting to note the recent investigation, published in PLOS One, on the potential effects of baseline non-competitive funding (in this case without further redistribution) in the Dutch system, and NWO’s commentary (Dutch only) challenging the assumptions and numbers used in the paper.

- Preselection based on CV

Another measure that NWO plans to consider (esp. for the Innovational Research Incentives Scheme consisting of the personal Veni, Vidi and Vici grants) is to preselect applications based on CV only (Fig. 3). The expectation is that this would result in “a considerable reduction in efforts (…), with the chance that excellent proposals are missed being very small” (pdf p. 11). This assumption is based on the observation (within the domain of Social Sciences and Humanities) that “only 8% of Veni-grants are awarded to the lowest 40% in terms of CV (only 2% to the ‘worst’ [sic] 30%)”.

Choice of language apart, I see three red flags with this measure and the underlying assumption:

- The percentages given might speak as much about the weight currently given to applicants’ CVs as about the content of the research proposals from these applicants. Only by blinding assessors on CVs can a true estimate be made of how many ‘excellent proposals’ would be missed by relying on CV info only.

- Relying on CVs to assess eligibility for submitting a proposal would further enhance the Matthew effect the report rightly warns against in the discussion on other measures.

- With this measure, the criteria on which the CV of a researcher is evaluated become of even greater importance. Will evaluation be done (explicitly or implicitly) on the basis of the length of the publication list and the perceived quality of journals and monograph publishers? Or will a more rounded approach be taken, with publications being assessed on their own value, other research output taken into account, and societal impact being considered as well?

Fig. 3. Preselection based on CV

DRAWING LOTS – YES OR NO?

One possible measure that has been discussed during the stakeholder meetings, but that NWO has decided against implementing, is that of drawing lots to decide on funding. NWO will not investigate or experiment with this approach either, but will keep an eye on current experiments in Germany (Volkswagen Stiftung – Experiment!), Denmark (Villum – Experiment*) and New Zealand (Health Research Council – Explorer grants) (Fig. 4).

Fig. 4. No plans for a lottery

Interestingly, in the first NWO stakeholder meeting, which I attended myself, a lottery draw was proposed as a secondary selection mechanism only, to decide between those proposals that all score equally in the eyes of peer reviewers after the regular assessment procedure. Selection between these proposals is often felt to be arbitrary, also by the review panel, which is why the idea of a lottery was proposed as more time-efficient and fair in these cases.
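As a rough sketch of what such a tie-break lottery could look like: proposals clearly above the funding cutoff are funded on their review score, and lots are drawn only among those tied at the cutoff. The function name, scores and number of grants below are hypothetical and not taken from the meeting or the report.

```python
import random


def select_with_tiebreak(scores, n_grants, seed=None):
    """Fund the top-scoring proposals; draw lots only among ties at the cutoff.

    `scores` maps a proposal id to its review score (higher is better).
    """
    if n_grants <= 0:
        return []
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    if len(ranked) <= n_grants:
        return [name for name, _ in ranked]
    cutoff = ranked[n_grants - 1][1]
    # Proposals strictly above the cutoff score are funded without a draw.
    clear = [name for name, score in ranked if score > cutoff]
    # Proposals exactly at the cutoff compete for the remaining grants.
    tied = [name for name, score in ranked if score == cutoff]
    rng = random.Random(seed)
    drawn = rng.sample(tied, n_grants - len(clear))
    return clear + drawn


# Assumed example: two grants, three proposals tied for the second one.
scores = {"A": 9, "B": 8, "C": 8, "D": 8, "E": 5}
print(select_with_tiebreak(scores, n_grants=2, seed=2017))
```

Here proposal A is always funded, and the second grant goes to one of B, C or D by chance instead of by a further round of panel discussion.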

The proposals referenced by NWO above go much further: first, a check of proposals on basic quality criteria is done in a double-blind procedure (so on content only, not relying on CV). Subsequent selection of proposals is then done by lottery. Thus in these models, lottery is really intended to replace, not complement, selection on ‘excellence’ by peer review.
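The two-step procedure described above can be sketched as follows. This is a simplified illustration, not the actual procedure of any of these funders: the function and field names are hypothetical, and the blind quality check is reduced to a yes/no flag per proposal.

```python
import random


def lottery_allocation(proposals, passes_quality_check, n_grants, seed=None):
    """Two-step allocation: a basic quality check, then a random draw.

    `passes_quality_check` stands in for the double-blind check on basic
    quality criteria; it sees the proposal only, not the applicant's CV.
    """
    eligible = [p for p in proposals if passes_quality_check(p)]
    if len(eligible) <= n_grants:
        return eligible
    rng = random.Random(seed)
    return rng.sample(eligible, n_grants)


# Assumed example data: five proposals, two grants available.
proposals = [
    {"id": "P1", "meets_basic_criteria": True},
    {"id": "P2", "meets_basic_criteria": False},
    {"id": "P3", "meets_basic_criteria": True},
    {"id": "P4", "meets_basic_criteria": True},
    {"id": "P5", "meets_basic_criteria": True},
]
funded = lottery_allocation(
    proposals, lambda p: p["meets_basic_criteria"], n_grants=2, seed=42
)
print([p["id"] for p in funded])
```

Note that no ranking on ‘excellence’ takes place at any point: every proposal that passes the check has the same chance of being funded.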

(*NB As far as I could ascertain, for the Villum Experiment grants, no lottery element is included; this assessment scheme only includes the double-blind peer review aspect)

Such experiments can also offer important insights into various aspects of grant assessment, such as the Matthew effect, the effect of conscious and unconscious bias of reviewers, and the difference over time in results of research selected on ‘excellence’ or on basic quality criteria only. Thus, they will contribute to more evidence-based research assessment. In the report, developing such evidence is mentioned as something NWO vows to take an active role in (pdf, p. 12) (Fig. 5).

Fig. 5. Supporting evidence-based assessment

RESEARCH ASSESSMENT: A QUESTION OF EXCELLENCE

Throughout the report, NWO stresses that while it aims to reduce the burden of applications and peer review, its goal remains to select the most ‘excellent’ proposals (e.g. see Fig. 4 above). While this reduces the problem to a manageable level, it also sidesteps the bigger question of what is good research and how best to stimulate that.

As has been argued elsewhere, e.g. in the paper “Excellence R Us” (Moore, Neylon, Eve et al., 2016), the focus on ‘excellence’ in research assessment stimulates hypercompetition and homophily (= same begets same). The resulting push to publish is not always conducive to reproducible research or to the advance of knowledge through collaboration.

This is why I think that the most interesting parts of the NWO report are proposals that will not (yet) be implemented: SOFA, double-blind peer review of proposals, allocation of at least some funding by lottery after an initial check for quality. These all have the potential to shift the current tenets of research assessment away from competing for excellence only.

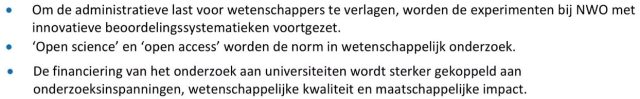

Together with measures that stimulate reproducible research (funding replication studies and both requesting and awarding pre-registrations, sharing methods and data and open access publication of all research output), this would create a culture where sound research is rewarded and collaboration and (re)use of research findings are encouraged. This is also in line with the ambitions of NWO as signatory to the National Plan Open Science (NPOS), and with the ambitions of the new Dutch government as outlined in the coalition agreement that was published last week (Fig. 6).

Fig. 6. Quotes from Dutch government coalition agreement, published Oct 10, 2017

With the coalition agreement, NPOS and this report on funding assessment measures on the table, it will be interesting and promising to follow developments in NWO policy over the coming months.

[edited to add: Also listen to the Oct 14 episode of the Dutch investigative reporting radio program Argos where the NWO funding allocation measures are discussed]